|

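To train the TensorFlow version of MobileNet-SSD, first set up the environment. This article uses Ubuntu 18.04 with the GPU build of TensorFlow. The setup is simple: nothing has to be compiled and a single conda command is enough, but conda must be installed first.

1. Environment setup

Install conda (other blog posts cover this), then install the following packages:

    # install
    conda install cudatoolkit=10.0 cudnn=7.6 tensorflow-gpu=1.15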
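A quick way to confirm that the install worked is to import TensorFlow and ask whether it sees a GPU. The following is a minimal sketch, not part of the original post: the function name `check_tensorflow` is hypothetical, and it assumes the TF 1.x call `tf.test.is_gpu_available()`, which is deprecated in TF 2.x.

```python
import importlib


def check_tensorflow():
    """Report whether TensorFlow imports and whether it can see a GPU."""
    report = {"installed": False, "version": None, "gpu_available": None}
    try:
        tf = importlib.import_module("tensorflow")
    except Exception:
        # Not installed in the active environment, or the install is broken.
        return report

    report["installed"] = True
    report["version"] = getattr(tf, "__version__", None)
    try:
        # TF 1.x API; deprecated (but still the simplest check) in later releases.
        report["gpu_available"] = bool(tf.test.is_gpu_available())
    except Exception:
        report["gpu_available"] = None  # could not be determined
    return report


print(check_tensorflow())
```

Run it inside the activated conda environment; if `installed` is False there, the packages probably went into a different environment.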

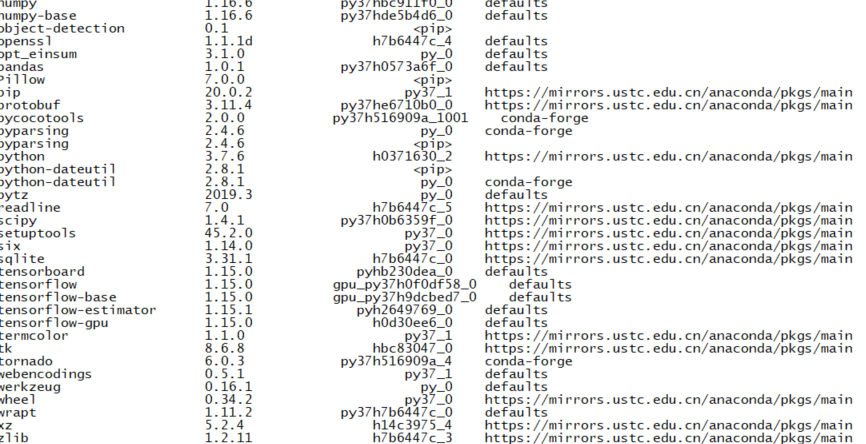

The installation output is shown in the figure:

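Some of the packages installed by conda

With the TensorFlow environment installed, build your own TFRecord dataset. First activate the virtual environment:

    source activate mobilenetssd

2. Building the dataset

Prepare your own VOC dataset, that is, the XML annotation files and the image files (the labeled samples). Create train_test_split.py to split the samples into three parts: train, test, and validation. The train+validation set takes 80% of the samples and the test set 20%; the training set takes 80% of the train+validation set, which gives 64% / 16% / 20% overall. Each part is copied to its own folder:

    import os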

    import random
    import time
    import shutil

    xmlfilepath = r'./Annotations'
    saveBasePath = r"./Annotations_save"

    trainval_percent = 0.8  # share of all samples used for train + validation
    train_percent = 0.8     # share of train + validation used for training

    total_xml = os.listdir(xmlfilepath)
    num = len(total_xml)
    indices = range(num)  # renamed from `list` to avoid shadowing the built-in

    tv = int(num * trainval_percent)
    tr = int(tv * train_percent)
    trainval = random.sample(indices, tv)
    train = set(random.sample(trainval, tr))
    trainval = set(trainval)  # sets make the membership tests below fast

    print("train and val size", tv)
    print("train size", tr)

    start = time.time()
    counts = {"train": 0, "validation": 0, "test": 0}

    for i in indices:
        name = total_xml[i]
        if i in train:
            directory = "train"
        elif i in trainval:
            directory = "validation"
        else:
            directory = "test"
        counts[directory] += 1

        # Create the target folder (and Annotations_save itself) if it is missing.
        xml_path = os.path.join(saveBasePath, directory)
        os.makedirs(xml_path, exist_ok=True)

        filePath = os.path.join(xmlfilepath, name)
        print(filePath)
        shutil.copyfile(filePath, os.path.join(xml_path, name))

    seconds = time.time() - start

    print("train total : " + str(counts["train"]))
    print("validation total : " + str(counts["validation"]))
    print("test total : " + str(counts["test"]))
    print("total number : " + str(sum(counts.values())))
    print("Time taken : {0} seconds".format(seconds))
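To make the 80% / 80% arithmetic concrete, here is a small sketch that computes the same split on index numbers only, without touching any files. The helper name `split_indices` and the fixed `seed` are assumptions added for illustration; the original script does not seed the random generator, so its split changes on every run.

```python
import random


def split_indices(num, trainval_percent=0.8, train_percent=0.8, seed=0):
    """Return (train, validation, test) index lists for `num` samples."""
    rng = random.Random(seed)  # fixed seed (an assumption) makes the split repeatable
    tv = int(num * trainval_percent)
    tr = int(tv * train_percent)
    trainval = rng.sample(range(num), tv)
    train = set(rng.sample(trainval, tr))
    trainval_set = set(trainval)
    validation = [i for i in trainval if i not in train]
    test = [i for i in range(num) if i not in trainval_set]
    return sorted(train), sorted(validation), test


train, validation, test = split_indices(1000)
print(len(train), len(validation), len(test))  # 640 160 200
```

With 1000 samples, 800 go to train+validation and 640 of those to training, which leaves 160 for validation and 200 for testing.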

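The split samples

As the figure shows, Annotations holds the XML files and Annotations_save holds the split copies, with three subfolders: test, train, and validation.

Next, create xml_to_csv.py to generate the CSV files:

    import os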

    import glob
    import pandas as pd
    import xml.etree.ElementTree as ET


    def xml_to_csv(path):
        """Collect one row per labeled object from every XML file under `path`."""
        xml_list = []
        for xml_file in glob.glob(path + '/*.xml'):
            root = ET.parse(xml_file).getroot()
            filename = root.find('filename').text
            print(filename)
            size = root.find('size')
            for member in root.findall('object'):
                # Look fields up by tag name instead of by position, so the
                # order of the elements in the XML does not matter.
                box = member.find('bndbox')
                value = (filename,
                         int(size.find('width').text),
                         int(size.find('height').text),
                         member.find('name').text,
                         # int(float(...)) also accepts values such as "240.0"
                         int(float(box.find('xmin').text)),
                         int(float(box.find('ymin').text)),
                         int(float(box.find('xmax').text)),
                         int(float(box.find('ymax').text)))
                xml_list.append(value)
        column_name = ['filename', 'width', 'height', 'class', 'xmin', 'ymin', 'xmax', 'ymax']
        return pd.DataFrame(xml_list, columns=column_name)


    def main():
        for directory in ['train', 'test', 'validation']:
            xml_path = os.path.join(os.getcwd(), 'Annotations_save/{}'.format(directory))
            xml_df = xml_to_csv(xml_path)
            # Note: the same file is overwritten on every pass, so my.csv ends up
            # holding only the last split. Use the per-split name below to keep all three.
            xml_df.to_csv('./my.csv', index=False)
            #xml_df.to_csv('/home/lijingle/sdb/mobilenetssdtrafficlight_{}_labels.csv'.format(directory), index=False)
        print('Successfully converted xml to csv.')


    if __name__ == '__main__':
        main()
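To see which fields the converter reads from a VOC annotation, the sketch below parses one inline XML string with the standard library only. The sample annotation and the helper name `rows_from_annotation` are made up for illustration; real files come from your labeling tool and may carry extra tags.

```python
import xml.etree.ElementTree as ET

# A made-up annotation in the usual VOC layout.
SAMPLE_XML = """
<annotation>
  <filename>000001.jpg</filename>
  <size><width>640</width><height>480</height><depth>3</depth></size>
  <object>
    <name>person</name>
    <pose>Unspecified</pose>
    <truncated>0</truncated>
    <difficult>0</difficult>
    <bndbox><xmin>48</xmin><ymin>240.0</ymin><xmax>195</xmax><ymax>371</ymax></bndbox>
  </object>
</annotation>
"""


def rows_from_annotation(xml_text):
    """Return one (filename, width, height, class, xmin, ymin, xmax, ymax) row per object."""
    root = ET.fromstring(xml_text.strip())
    filename = root.find("filename").text
    size = root.find("size")
    width = int(size.find("width").text)
    height = int(size.find("height").text)
    rows = []
    for obj in root.findall("object"):
        box = obj.find("bndbox")
        # int(float(...)) tolerates coordinates written as "240.0".
        coords = [int(float(box.find(tag).text)) for tag in ("xmin", "ymin", "xmax", "ymax")]
        rows.append((filename, width, height, obj.find("name").text, *coords))
    return rows


print(rows_from_annotation(SAMPLE_XML))
```

Each tuple matches the column order of the CSV: filename, width, height, class, xmin, ymin, xmax, ymax.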

Finally, build the TFRecord file. Create generate_tfrecord.py:

    #!/usr/bin/env python3

    # -*- coding: utf-8 -*-
    """
    Created on Tue Mar 5 15:28:55 2019

    @author: z
    """

    """
    Usage:
    # From tensorflow/models/
    # Create train data:
    python generate_tfrecord.py --csv_input=data/tv_vehicle_labels.csv --output_path=train.record
    # Create test data:
    python generate_tfrecord.py --csv_input=data/test_labels.csv --output_path=test.record
    """

    import os
    import io
    import pandas as pd
    import tensorflow as tf

    from PIL import Image
    from object_detection.utils import dataset_util
    from collections import namedtuple, OrderedDict

    # Working directory; relative output paths are resolved against it.
    os.chdir('/home/lijingle/deep_work/2Dimage/mobilenetssd/models/research/')

    flags = tf.app.flags
    flags.DEFINE_string('csv_input', '', 'Path to the CSV input')
    flags.DEFINE_string('output_path', '', 'Path to output TFRecord')
    FLAGS = flags.FLAGS


    # TO-DO replace this with label map
    def class_text_to_int(row_label):
        # List all of your classes here.
        if row_label == 'person':
            return 1
        # Fail early with a clear message; returning None would only fail
        # later, when the label list is packed into an int64 feature.
        raise ValueError('Unknown class label: {}'.format(row_label))


    def split(df, group):
        """Group the CSV rows by `group` (the image filename)."""
        data = namedtuple('data', ['filename', 'object'])
        gb = df.groupby(group)
        return [data(filename, gb.get_group(x)) for filename, x in zip(gb.groups.keys(), gb.groups)]


    def create_tf_example(group, path):
        with tf.gfile.GFile(os.path.join(path, '{}'.format(group.filename)), 'rb') as fid:
            encoded_jpg = fid.read()
        encoded_jpg_io = io.BytesIO(encoded_jpg)
        image = Image.open(encoded_jpg_io)
        width, height = image.size

        filename = group.filename.encode('utf8')
        image_format = b'jpg'
        xmins = []
        xmaxs = []
        ymins = []
        ymaxs = []
        classes_text = []
        classes = []

        for index, row in group.object.iterrows():
            # Box coordinates are stored normalized to [0, 1].
            xmins.append(row['xmin'] / width)
            xmaxs.append(row['xmax'] / width)
            ymins.append(row['ymin'] / height)
            ymaxs.append(row['ymax'] / height)
            classes_text.append(row['class'].encode('utf8'))
            print(classes_text)
            classes.append(class_text_to_int(row['class']))
            print(classes)

        tf_example = tf.train.Example(features=tf.train.Features(feature={
            'image/height': dataset_util.int64_feature(height),
            'image/width': dataset_util.int64_feature(width),
            'image/filename': dataset_util.bytes_feature(filename),
            'image/source_id': dataset_util.bytes_feature(filename),
            'image/encoded': dataset_util.bytes_feature(encoded_jpg),
            'image/format': dataset_util.bytes_feature(image_format),
            'image/object/bbox/xmin': dataset_util.float_list_feature(xmins),
            'image/object/bbox/xmax': dataset_util.float_list_feature(xmaxs),
            'image/object/bbox/ymin': dataset_util.float_list_feature(ymins),
            'image/object/bbox/ymax': dataset_util.float_list_feature(ymaxs),
            'image/object/class/text': dataset_util.bytes_list_feature(classes_text),
            'image/object/class/label': dataset_util.int64_list_feature(classes),
        }))
        return tf_example


    def main(_):
        writer = tf.python_io.TFRecordWriter(FLAGS.output_path)
        # Image folder. The path is absolute, so joining it with os.getcwd()
        # (as the original did) had no effect.
        path = '/home/lijingle/sdb/JPEGImages_p_train'
        examples = pd.read_csv(FLAGS.csv_input)
        grouped = split(examples, 'filename')
        num = 0
        for group in grouped:
            num += 1
            tf_example = create_tf_example(group, path)
            writer.write(tf_example.SerializeToString())
            if num % 100 == 0:  # print progress every 100 converted images
                print(num)

        writer.close()
        output_path = os.path.join(os.getcwd(), FLAGS.output_path)
        print('Successfully created the TFRecords: {}'.format(output_path))


    if __name__ == '__main__':
        tf.app.run()
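The script carries a "TO-DO replace this with label map" comment. One possible way to address it, sketched here under assumptions, is to keep the class names in a single list and derive both the lookup and the label map text from it. The names `LABELS`, `LABEL_TO_ID`, and `label_map_pbtxt` are hypothetical, and the `item { id ... name ... }` layout is the label map format the TensorFlow Object Detection API expects as far as I know; ids start at 1 because 0 is reserved for the background class.

```python
# Hypothetical class list; extend it with your own classes.
LABELS = ["person"]

# Ids start at 1 because 0 is reserved for background.
LABEL_TO_ID = {name: idx for idx, name in enumerate(LABELS, start=1)}


def class_text_to_int(row_label):
    """Map a class name to its integer id, failing loudly on unknown names."""
    try:
        return LABEL_TO_ID[row_label]
    except KeyError:
        raise ValueError("Unknown class label: {}".format(row_label))


def label_map_pbtxt(labels):
    """Build the text of a label map file from the class list."""
    items = []
    for idx, name in enumerate(labels, start=1):
        items.append("item {\n  id: %d\n  name: '%s'\n}\n" % (idx, name))
    return "\n".join(items)


print(class_text_to_int("person"))
print(label_map_pbtxt(LABELS))
```

Keeping one list as the source of truth means the ids written into the TFRecord and the ids in the label map used at training time cannot drift apart.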

Generate the TFRecord file with the following command, passing the CSV of the split you want to convert:

    python generate_tfrecord.py --csv_input=/home/lijingle/sdb/mobilenetssd/my.csv --output_path=./trafficlight_train.tfrecord

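Note: the CSV path must be absolute. The script changes its working directory with os.chdir, so relative paths are resolved against that directory.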
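After the command finishes, it can be reassuring to check that the file contains one record per image. The sketch below counts records with the standard library only, assuming the usual TFRecord framing (an 8-byte little-endian length, a 4-byte CRC of the length, the payload, and a 4-byte CRC of the payload). It skips the CRCs rather than verifying them, and the function name `count_tfrecord_records` is hypothetical.

```python
import struct


def count_tfrecord_records(path):
    """Count records by walking the TFRecord framing; CRCs are skipped, not verified."""
    count = 0
    with open(path, "rb") as f:
        while True:
            header = f.read(8)
            if not header:
                break  # clean end of file
            if len(header) < 8:
                raise ValueError("truncated length header")
            (length,) = struct.unpack("<Q", header)
            if len(f.read(4)) < 4:  # CRC of the length field
                raise ValueError("truncated length CRC")
            if len(f.read(length)) < length:  # serialized tf.train.Example
                raise ValueError("truncated record payload")
            if len(f.read(4)) < 4:  # CRC of the payload
                raise ValueError("truncated payload CRC")
            count += 1
    return count


# Example call (the path is the output file from the command above):
# print(count_tfrecord_records("trafficlight_train.tfrecord"))
```

The count should equal the number of distinct filenames in the CSV, since the script writes one example per image.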

This completes the TFRecord file. Reference: https://www.cnblogs.com/gezhuangzhuang/p/10613468.html

|